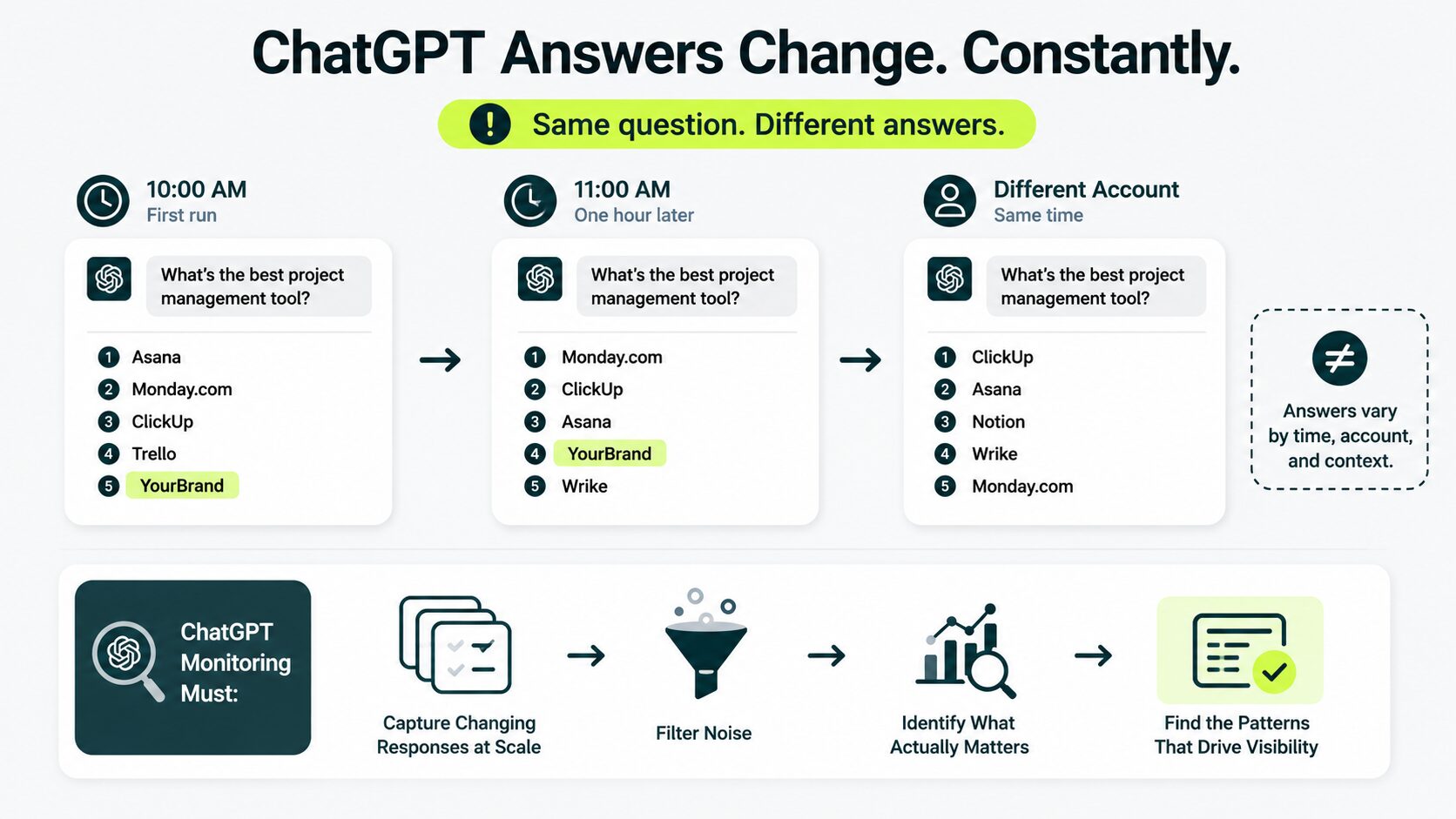

A buyer asks ChatGPT for the best tool in your category. The answer shows five brands. Yours might appear in the list. Run the same prompt again an hour later, and the answer changes. Try it from a different account, and it changes again. That’s the core problem with ChatGPT monitoring.

Monitoring brand mentions in ChatGPT means capturing changing responses at scale, filtering noise, and identifying the patterns that actually matter.

Key Takeaways:

- ChatGPT answers jump around. Run the same prompt twice and you’ll almost never get the same result. Research shows there’s less than a 1% chance of seeing the exact same brand list twice.

- Mentions, citations, and source pulls are three different things. Track them separately, or you’ll mismeasure your visibility.

- A manual baseline audit gives you a reliable starting point before automation becomes necessary.

- The metric that matters most is the visibility percentage across many runs, not ‘ranking position’ within a single answer.

- What ChatGPT says about your brand usually reflects what the broader web says about your brand.

Why ChatGPT Monitoring Doesn’t Work Like Google Tracking

ChatGPT’s answers are probabilistic: the model samples each response rather than retrieving a fixed result, so the same prompt produces a different brand list almost every time you run it.

ChatGPT has no traditional SERP layout, no fixed positions, and no consistent answer. This changes everything. On Google, one search gives you one spot, and you can climb with effort. On ChatGPT, one search gives you one answer, and the next person might not see you at all.

Research from SparkToro tested this directly. Participants repeatedly ran the same brand recommendation prompt through ChatGPT, Claude, and Google’s AI system. There’s a less than 1-in-100 chance that ChatGPT or Google’s AI returns the exact same brand list twice for the same prompt. Same wording. Same account. Different answers.

That has three direct consequences for how you set up monitoring:

1) A single prompt run is not data

It’s an anecdote. To say anything reliable, you need many runs of the same prompt over time.

2) “Ranking” is the wrong frame

There is no #1 spot. The metric that matters is the visibility percentage across many runs. What share of responses include your brand at all?

3) Variance itself is information

If a brand appears in 80 of 100 runs, the market has structurally embedded it. A brand that appears in 8 of 100 runs is on the edge, and that edge moves week to week.

Most teams miss this. They check one prompt a month, see the answer change, and panic. That’s not real movement. That’s just noise.

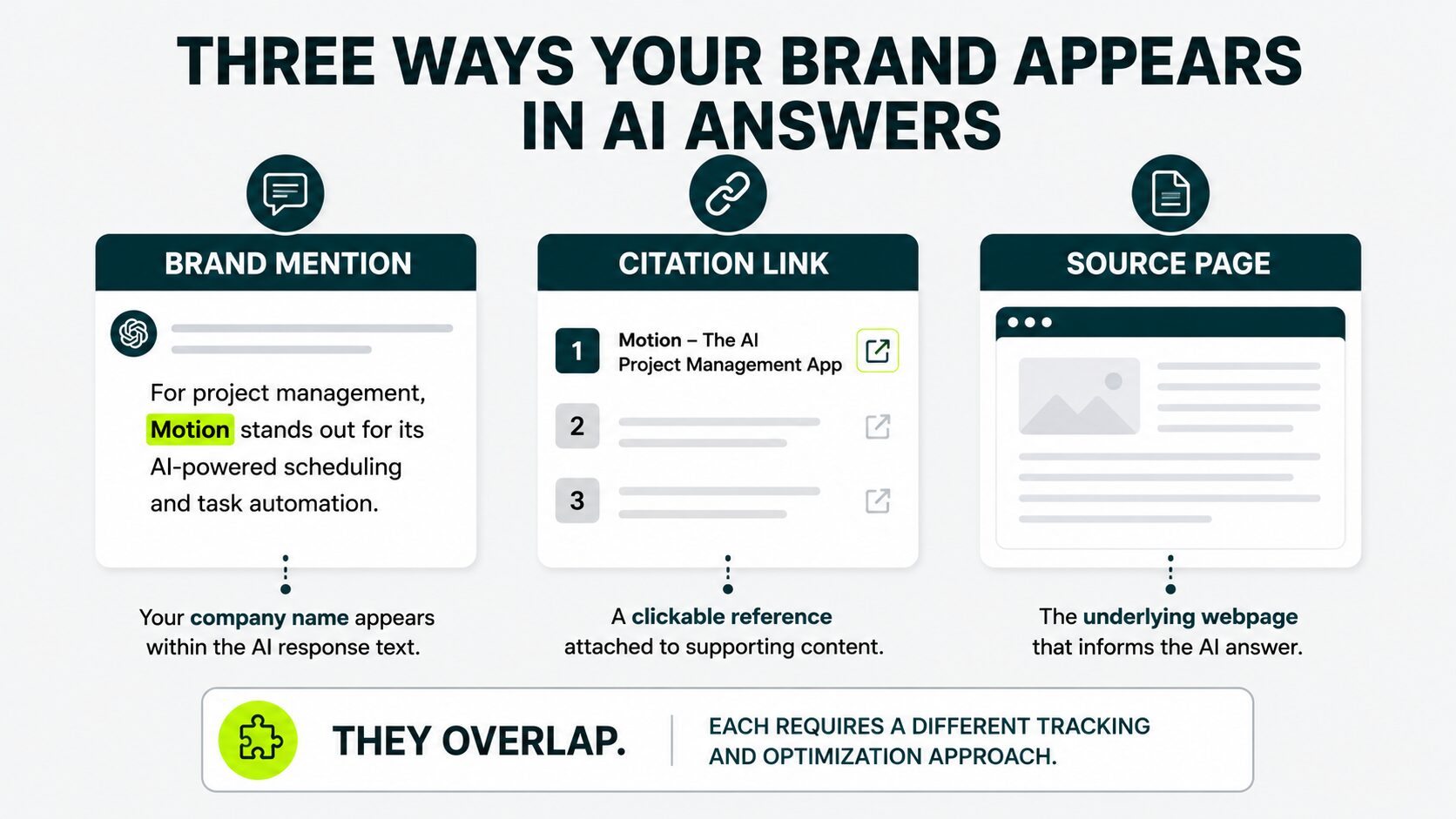

Mentions, Citations, and Sources Are Three Different Things

A brand mention is when your company name appears within the ChatGPT response text. A citation link is a clickable reference attached to supporting content. A source page is the underlying webpage that informs the answer. These signals overlap, but each requires a different tracking and optimization approach.

Before measuring anything, separate the different signals inside a ChatGPT response. They often look similar on the surface, but they behave very differently in your strategy.

Brand Mention

A brand mention happens when your company name appears directly inside the response text.

For example:

“For B2B SaaS, tools like Acme and Beta are commonly recommended.”

In this case, Acme is being mentioned as part of the answer itself. This is what users actually read first.

Citation Link

A citation is the linked reference ChatGPT sometimes attaches to supporting content. These usually appear as:

- Numbered links

- Sidebar references

- Source citations

Mentions and citations do not always overlap. ChatGPT may cite your website even when your brand name never appears in the answer text.

Source Page

A source page is the underlying webpage that ChatGPT used to generate the response. Sometimes those pages are visible to users. Sometimes they are not.

The source is the input. The generated answer is the output. That distinction matters.

The strategic implication:

Each visibility problem points to a different underlying issue.

If your brand is missing from in-text mentions, the fix is usually to build your footprint in third-party sources, such as industry comparisons, brand mentions in publications, and review content.

If ChatGPT mentions your brand but skips citations, you should strengthen your core SEO fundamentals. Make your pages clearer, more authoritative, and easier to extract. Working with a dedicated outreach team can compress this process from months to weeks.

These are different problems, and they require different fixes. Teams that combine all three signals into one metric usually spend months optimizing the wrong thing.
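
To make the separation concrete, here is a minimal sketch of how one logged response could be split into the three signals. It assumes you have already captured the answer text and the list of cited URLs. The function name, the `Signals` fields, and the `acme.example` domain are all hypothetical, and the plain substring match is deliberately naive (it would also match a longer name that contains "Acme").

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Signals:
    mentioned: bool                 # brand name appears in the answer text
    cited: bool                     # one of the brand's own pages is among the cited links
    third_party_sources: list = field(default_factory=list)  # other cited hosts


def classify_response(answer_text, cited_urls, brand_name, brand_domain):
    """Split one logged response into mention, citation, and source signals."""
    mentioned = brand_name.lower() in answer_text.lower()
    cited = False
    third_party = []
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == brand_domain or host.endswith("." + brand_domain):
            cited = True
        elif host:
            third_party.append(host)
    return Signals(mentioned, cited, third_party)


# Hypothetical logged response: Acme is named in the text but not cited.
example = classify_response(
    answer_text="For B2B SaaS, tools like Acme and Beta are commonly recommended.",
    cited_urls=["https://reviews.example.org/best-b2b-saas-tools"],
    brand_name="Acme",
    brand_domain="acme.example",
)
print(example)
```

Logging the three fields as separate columns keeps a "mentioned but never cited" pattern visible instead of averaging it away.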

Designing a Prompt Set That’s Actually Worth Tracking

A useful prompt set usually includes four categories: direct brand prompts, category discovery prompts, competitor comparison prompts, and use case prompts.

Most teams’ tracking fails because their prompts are wrong. Teams often track prompts like:

“What does ChatGPT say about our brand?” That tells you very little.

Direct brand prompts already contain your brand name, so they only show what ChatGPT says to people who know you. The visibility that matters most comes earlier in the buyer journey, before the customer knows your company exists. That’s the visibility you need to measure.

Build your monitoring prompts across four categories. Each one answers a different strategic question.

Direct Brand Prompts

Examples:

- “Tell me about [brand].”

- “Is [brand] a good [category] tool?”

These prompts reveal how ChatGPT describes your company. Here you need to look for:

- Outdated positioning

- Incorrect features

- Weak descriptions

- Negative framing

Variance is usually lower here. The bigger risk is inaccurate representation, not invisibility.

Category Discovery Prompts

Examples:

- “What are the best [category] tools for [persona]?”

- “Recommend [category] software for a [industry] company.”

These are the highest-value prompts in most categories. They reflect how buyers start researching solutions before they know specific brands.

Your visibility percentage here acts like an AI-era market share signal. If your brand is missing consistently, competitors are owning the discovery layer.

Comparison Prompts

Examples:

- “[Brand] vs [Competitor]”

- “Alternatives to [Established Competitor]”

These reveal whether ChatGPT positions you alongside the incumbents or skips you entirely when listing options.

Use Case Prompts

Examples:

- “What’s the best tool for managing remote onboarding?”

- “Best software to automate customer reporting?”

These prompts focus on problems instead of categories. That matters.

Users who ask use-case questions often have no loyalty to any existing brand. If your company appears consistently here, ChatGPT is treating you as a practical solution to the problem, not just a name in a category.

A working prompt set has 12-25 prompts total, weighted heavily toward category discovery and use cases. Direct brand prompts are diagnostic, not strategic. If you only track those, you’ll think you’re winning when you’re invisible to anyone who doesn’t already know your name.
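
One way to keep a prompt set fixed and auditable is to generate it from templates. The sketch below reuses the example prompts from the four categories above. The placeholder values (Acme, Beta, the personas, and so on) are made up for illustration, and `expand` is a hypothetical helper, not part of any tracking tool.

```python
from itertools import product
from string import Formatter

# Templates mirror the four categories; use-case prompts are kept as literals.
TEMPLATES = {
    "direct_brand": ["Tell me about {brand}.", "Is {brand} a good {category} tool?"],
    "category_discovery": [
        "What are the best {category} tools for {persona}?",
        "Recommend {category} software for a {industry} company.",
    ],
    "comparison": ["{brand} vs {competitor}", "Alternatives to {competitor}"],
    "use_case": [
        "What's the best tool for managing remote onboarding?",
        "Best software to automate customer reporting?",
    ],
}

# Hypothetical values; swap in your own brand, category, and personas.
VALUES = {
    "brand": ["Acme"],
    "category": ["onboarding"],
    "persona": ["HR teams", "startups"],
    "industry": ["healthcare"],
    "competitor": ["Beta"],
}


def expand(template, values):
    """Fill a template with every combination of the placeholders it uses."""
    fields = [name for _, name, _, _ in Formatter().parse(template) if name]
    fields = list(dict.fromkeys(fields))  # de-duplicate, keep order
    combos = product(*(values[f] for f in fields))
    return [template.format(**dict(zip(fields, combo))) for combo in combos]


prompt_set = {
    category: [p for t in templates for p in expand(t, VALUES)]
    for category, templates in TEMPLATES.items()
}

for category, prompts in prompt_set.items():
    print(category, len(prompts))
```

Printing the count per category makes it easy to check that the set leans toward category discovery and use cases rather than direct brand prompts.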

In most client audits we’ve run, category discovery prompts expose the biggest visibility gaps. One cybersecurity SaaS client consistently appeared in branded searches but disappeared entirely when prompted with “best endpoint security tools for remote teams.”

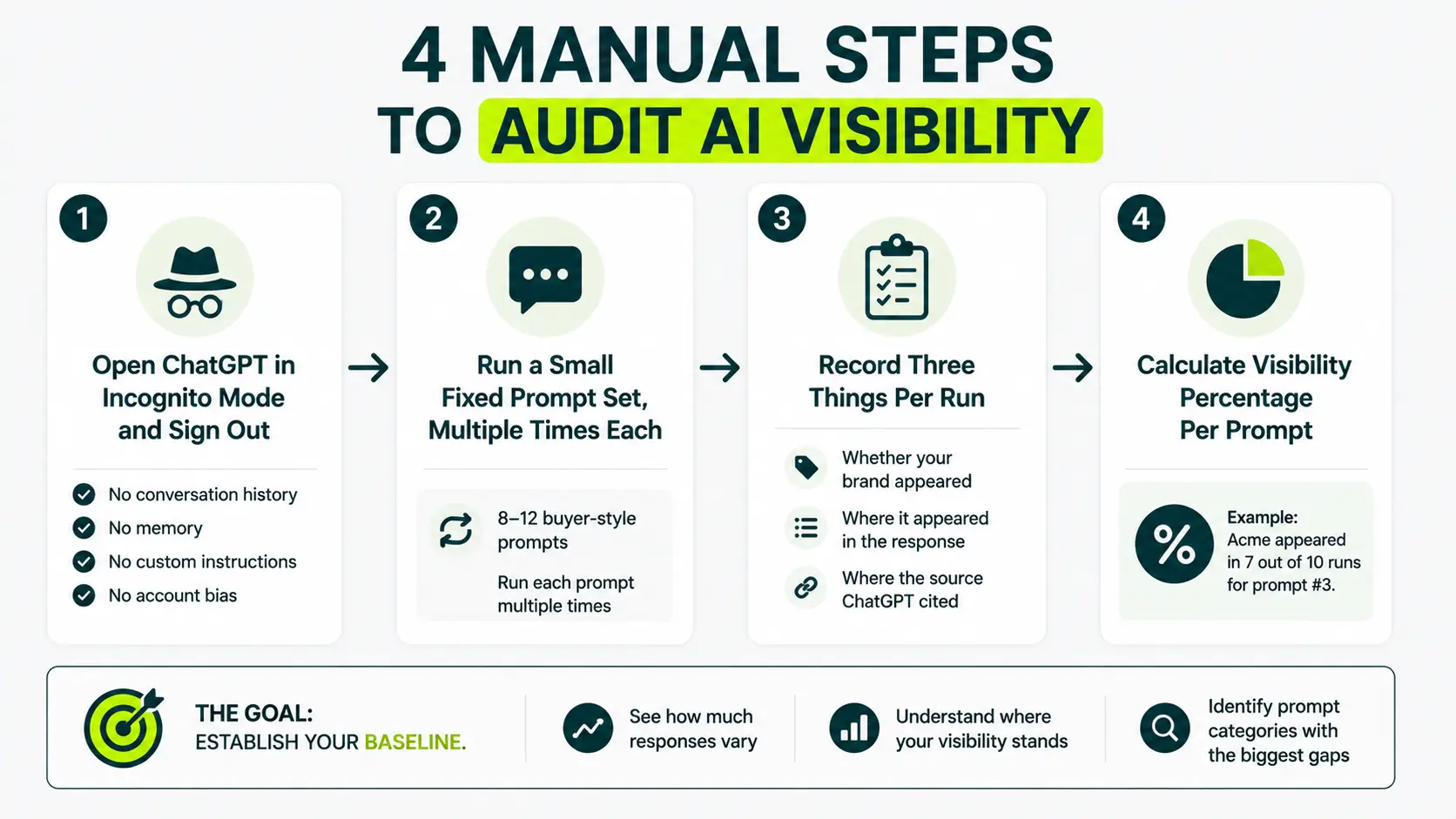

The Manual Audit That Sets Your Baseline

Start by running a manual baseline audit before using any tracking tool.

Open ChatGPT in an incognito window while signed out, and test a fixed set of prompts multiple times.

Record whether your brand appeared and which sources were cited, then calculate visibility percentages per prompt category to create a reliable benchmark.

This costs nothing, takes a few hours, and gives you a reliable starting point for every future comparison. Most teams skip this step. That usually leads to poor measurement later.

The audit has four steps:

1) Open ChatGPT in Incognito Mode and Sign Out

ChatGPT personalizes responses based on your:

- Conversation history

- Memory

- Custom instructions

- Account behavior

If you test from your regular account, you may only be seeing how ChatGPT responds to you, not what it shows your prospects. A fresh, signed-out session removes that bias.

2) Run a Small Fixed Prompt Set, Multiple Times Each

Use 8-12 prompts that reflect how real buyers search in your category. Run each prompt multiple times. Yes, it’s repetitive. Do it anyway. Watching the variance yourself teaches you more than most dashboards will.

3) Record Three Things Per Run

For every response, track:

- Whether your brand appeared

- Where it appeared in the response

- Which sources ChatGPT cited

A spreadsheet is enough for the first audit. You do not need expensive tools to begin.

4) Calculate Visibility Percentage Per Prompt

This becomes your baseline metric. For example,

“Acme appeared in 7 out of 10 runs for prompt #3.”

That gives you a visibility percentage for the prompt. Once you aggregate all prompts together, you get a broader visibility score across the category.
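
The arithmetic is simple enough to do in the spreadsheet itself, but a short script helps once the log grows. This is a minimal sketch that assumes each row holds a prompt ID, a prompt category, and whether the brand appeared. The rows below are invented for illustration, with prompt #3 matching the 7-out-of-10 example.

```python
from collections import defaultdict

# Each row mirrors one spreadsheet line: (prompt_id, category, brand_appeared).
runs = (
    [("prompt_3", "category_discovery", True)] * 7
    + [("prompt_3", "category_discovery", False)] * 3
    + [("prompt_1", "direct_brand", True)] * 9
    + [("prompt_1", "direct_brand", False)] * 1
)


def visibility_by(rows, key_index):
    """Return visibility percentage grouped by the chosen column."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for row in rows:
        key = row[key_index]
        totals[key] += 1
        hits[key] += int(row[2])
    return {key: 100.0 * hits[key] / totals[key] for key in totals}


per_prompt = visibility_by(runs, 0)     # e.g. prompt_3: 7 of 10 runs
per_category = visibility_by(runs, 1)   # baseline per prompt category
overall = 100.0 * sum(int(r[2]) for r in runs) / len(runs)

print(per_prompt)
print(per_category)
print(f"overall: {overall:.0f}%")
```

Keeping the per-category figure next to the overall score matters, because a strong direct-brand number can hide a weak category-discovery number inside a single average.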

When we ran a 12-prompt baseline for a mid-market SaaS client last quarter, their visibility on direct brand prompts was 92%, but on category prompts (‘best [category] for [persona]’) it sat at 31%. The gap was the entire problem.

A manual audit is not a long-term system. It’s the calibration step. After one full pass, you understand:

- How much responses vary

- Where your visibility actually stands

- Which prompt categories expose the biggest gaps

Without that baseline, later trend data becomes much harder to interpret.

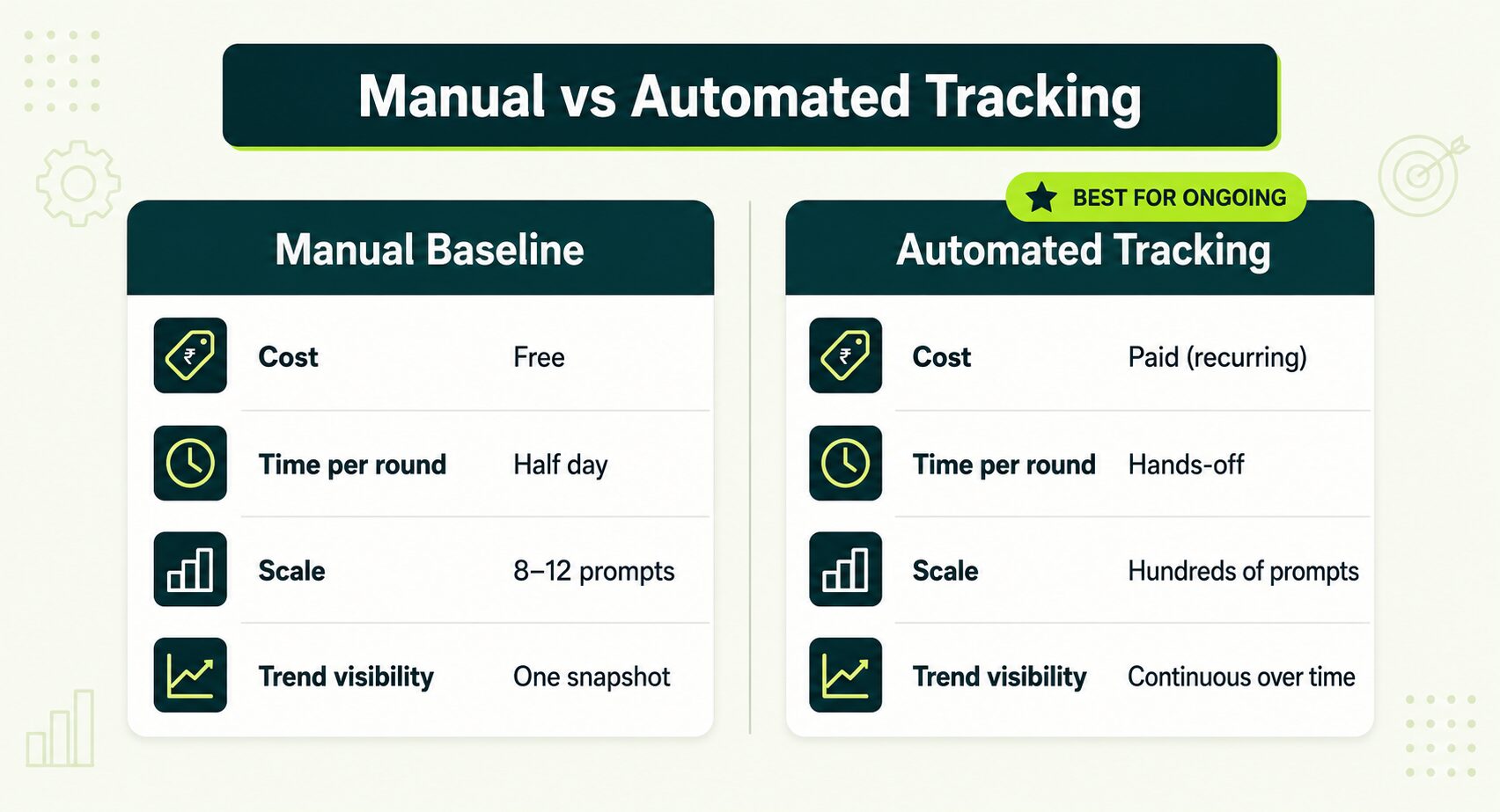

Choosing a Tool When Manual Stops Scaling

Manual audits establish the baseline. They do not scale well for ongoing monitoring. Once you start tracking dozens of prompts weekly, automation becomes necessary. That usually means selecting a dedicated tracking platform. The category has evolved quickly, and most tools now fall into three broad groups.

Dedicated AI Visibility Platforms

Built specifically for tracking brand visibility across AI search platforms such as ChatGPT, Perplexity, Gemini, and Claude. They run scheduled prompts at scale, log mentions and citations, surface competitor share-of-voice, and chart trends over time. Examples include:

- Otterly.AI

- Profound

- GenRank

- Peec.ai

For teams focused heavily on AI visibility, this is usually the cleanest setup.

SEO Suites With AI Visibility Modules

Platforms like Ahrefs (Brand Radar), Semrush, and SE Ranking have added ChatGPT tracking to their existing suites.

The biggest advantage is operational simplicity. SEO and AI visibility tracking live in one place. The trade-off is depth. Some platforms handle AI tracking well. Others still feel early.

Brand Monitoring and PR Platforms With AI Lenses

PR and media monitoring platforms are also expanding into AI visibility tracking. Tools like Meltwater now layer AI monitoring on top of:

- Media listening

- Social tracking

- PR reporting

This works best for teams already operating inside larger monitoring systems. If AI tracking is your only goal, dedicated tools are usually more efficient.

Tip: Three questions to ask any AI tracking vendor before you sign.

1) How many prompt runs do they execute per tracked prompt?

Anything under 10 samples per reporting cycle is usually too small to overcome response variance. Twenty or more runs per prompt each week produce much more reliable trend data.

2) Do they track mentions and citations separately?

If a tool only counts URL citations, it’s missing in-text mentions, and most ChatGPT references are mentions, not links.

3) How transparent is the methodology?

Ask vendors:

- How they sample prompts

- When they refresh prompts

- How they handle ChatGPT’s response variance

- How they measure the visibility percentage

The teams running rigorous tracking will explain it. The teams running thin tracking will dodge.

How to Read the Numbers Without Fooling Yourself

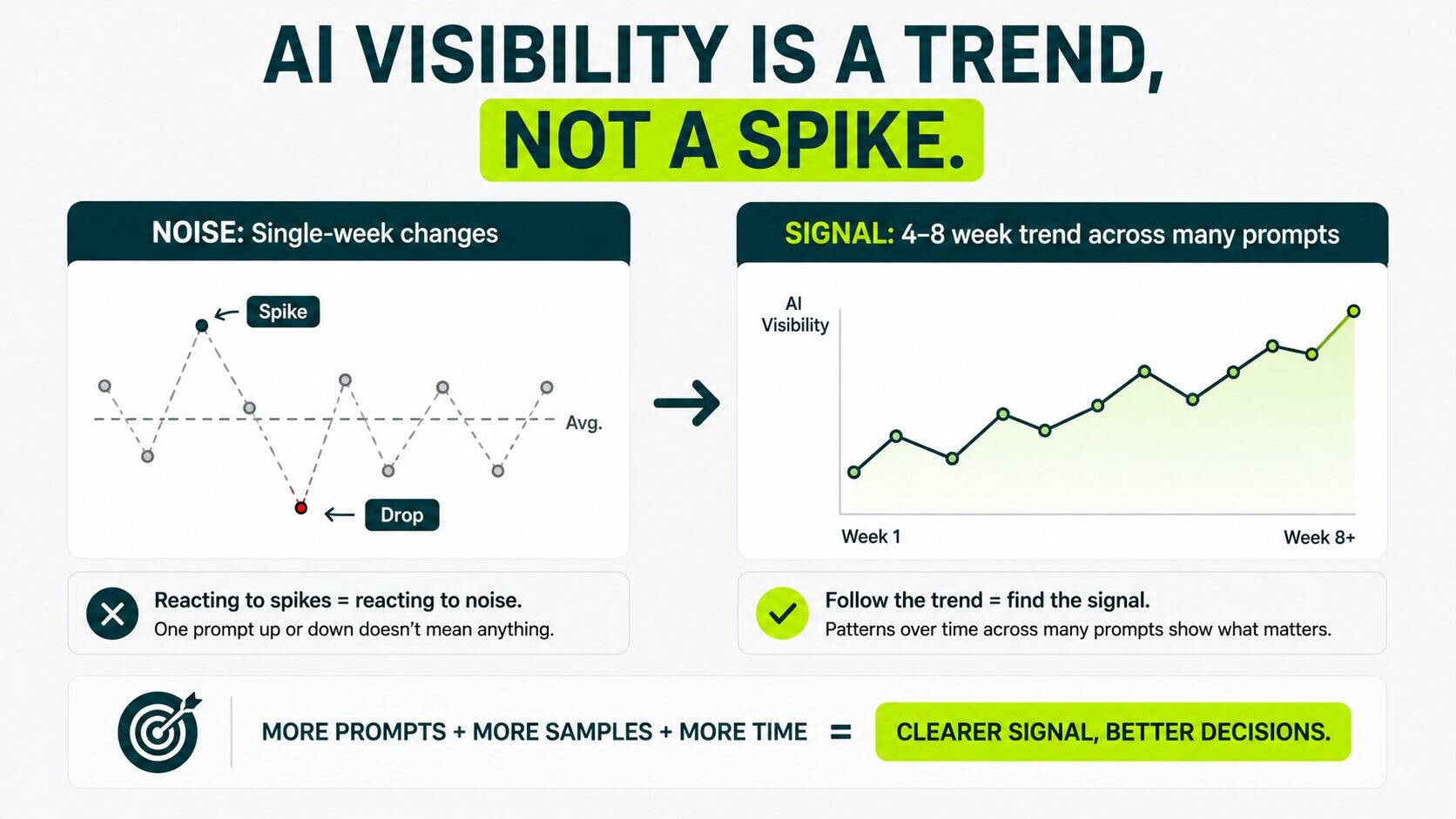

ChatGPT outputs are noisy by design, and small week-over-week changes in your visibility numbers are usually noise, not signal. The pattern that matters is the four- to eight-week trend across many prompts, not the spike of any single one.

A few rules for staying honest with the data:

Aggregate Before You Diagnose

Don’t react to a single prompt’s number. Look at the average across:

- Your full prompt set

- Individual prompt categories

- Visibility trends over time

One prompt dropping is usually noise. Multiple category-discovery prompts dropping together is a stronger signal that something actually changed.
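
A rough way to see why aggregation helps is to compare the same drop at two sample sizes. The sketch below uses a two-proportion z-test, which is our illustrative choice here and not a method any particular tool is known to use. It also assumes runs are independent, which is only approximately true for related prompts. The counts are hypothetical.

```python
import math


def two_proportion_z(hits_before, n_before, hits_after, n_after):
    """z-score for the change in visibility between two periods."""
    p_before = hits_before / n_before
    p_after = hits_after / n_after
    pooled = (hits_before + hits_after) / (n_before + n_after)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_before + 1 / n_after))
    if se == 0:
        return 0.0  # no variation at all, so no measurable change
    return (p_after - p_before) / se


# One prompt, 20 runs per period: visibility falls from 40% to 25%.
z_single = two_proportion_z(8, 20, 5, 20)

# Five category-discovery prompts pooled, 100 runs per period: same 40% -> 25%.
z_category = two_proportion_z(40, 100, 25, 100)

for label, z in [("single prompt", z_single), ("pooled category", z_category)]:
    verdict = "likely a real change" if abs(z) > 1.96 else "within normal noise"
    print(f"{label}: z = {z:.2f} ({verdict})")
```

The percentage drop is identical in both cases. Only the pooled version has enough runs behind it to clear the conventional 1.96 threshold.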

Treat Sample Size Like a Research Question

Small sample sizes create weak conclusions. If a tool runs only five samples per prompt each week, the trend line remains unstable. Twenty samples per week produce far more reliable visibility data over time. More samples reduce randomness. That matters more here than most teams realize.
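
To put a number on that, you can attach an uncertainty range to each visibility percentage. The sketch below uses the Wilson score interval, a standard formula for proportions from small samples. It is offered as an illustration under the assumption that runs are independent, not as how any vendor computes its figures.

```python
import math


def wilson_interval(hits, n, z=1.96):
    """Approximate 95% interval for a visibility rate of hits out of n runs."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Same observed visibility (40%) at two sample sizes.
low_5, high_5 = wilson_interval(2, 5)
low_20, high_20 = wilson_interval(8, 20)

print(f"5 runs:  {low_5:.0%} to {high_5:.0%}")
print(f"20 runs: {low_20:.0%} to {high_20:.0%}")
```

With five runs, an observed 40% is consistent with a true rate anywhere from about 12% to 77%. With twenty runs the range narrows to about 22% to 61%, which is still wide but narrow enough for a multi-week trend to show through.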

Check the Source List, Not Just the Answer

When visibility drops, look at what ChatGPT is citing instead. Sometimes the answer changes because:

- New comparison articles appeared

- Competitor reviews gained visibility

- Industry publications shifted

Those are actionable findings. If the sources are the same and only the answer changed, it’s just variance.

Beware “Ranking Position” Charts

Some tools report figures like ‘you ranked 3rd in 60% of responses.’ Position numbers in ChatGPT aren’t stable enough to track. The order changes more than the brand list does. Treat position as context, not as a KPI.

Watch for Accuracy Drift, Not Just Visibility

High visibility alone is not enough. A brand appearing in 80% of responses with inaccurate positioning may be in worse shape than one appearing in 50% of responses with strong, accurate framing. Here, you need to pay attention to:

- Outdated descriptions

- Incorrect pricing references

- Missing features

- Misleading positioning

Accuracy influences trust just as much as visibility does.

We’ve also seen sudden visibility drops that initially appeared alarming but were traced to source changes rather than actual shifts in model preference.

In one audit, a publisher removed comparison pages that ChatGPT had been citing heavily, and the decline in visibility followed within weeks.

What to Do With What You Find

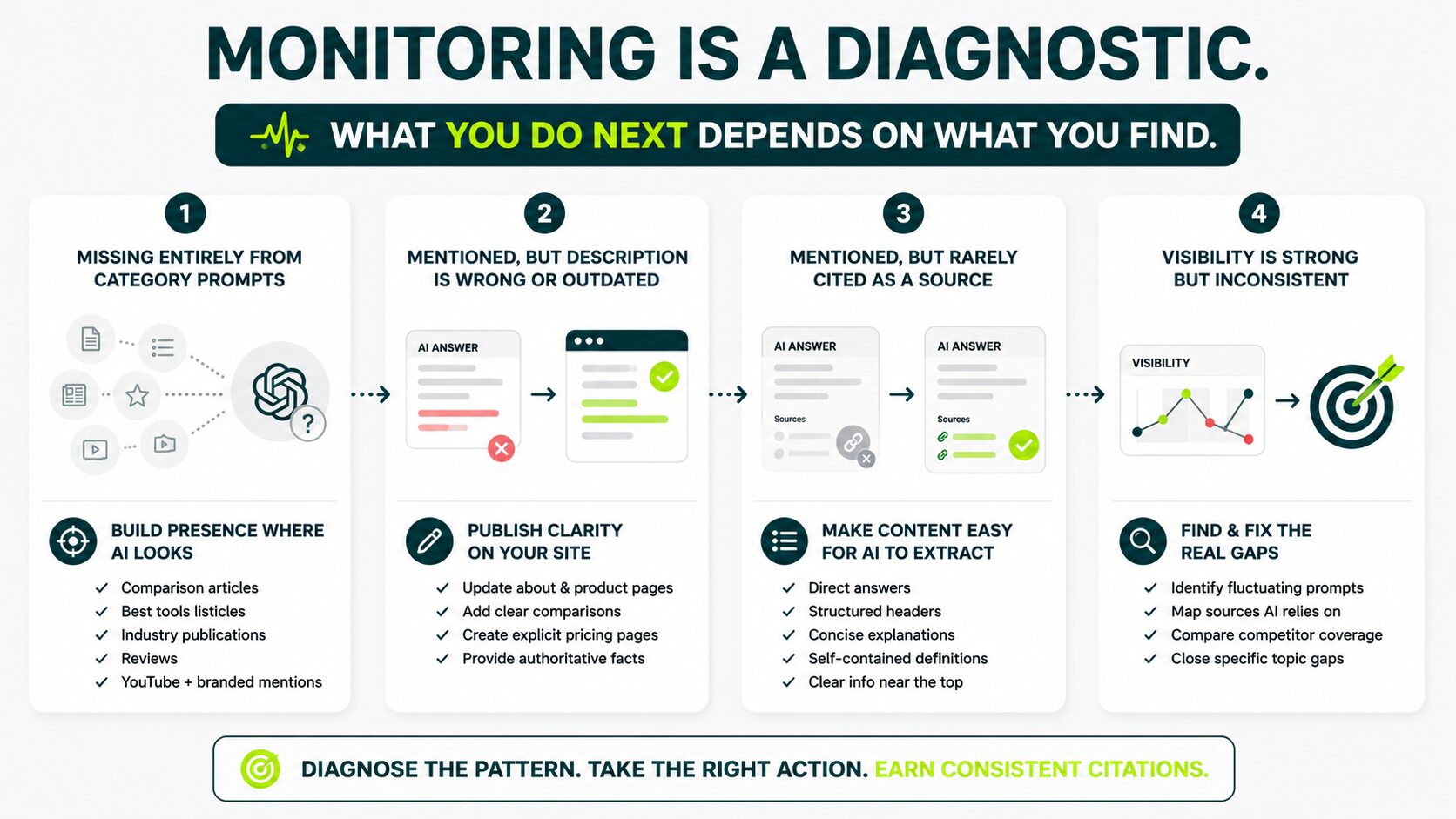

Monitoring is a diagnostic. What you do next depends on what you find.

If You’re Missing Entirely From Category Prompts

The issue is usually a weak presence across the third-party sources ChatGPT trusts. Improving this often requires strategic brand mention campaigns across industry publications, reviews, and comparison content.

Ahrefs analyzed 75,000 brands and found that YouTube mentions and branded web mentions show the strongest correlation with AI brand visibility across ChatGPT, AI Mode, and AI Overviews.

If your brand is absent from trusted external discussions, AI systems struggle to consistently surface you.

If You’re Mentioned, But The Description is Wrong or Outdated

Publish authoritative, structured content on your own site that clarifies the facts ChatGPT is getting wrong. Update your about page, refresh product pages, and publish an explicit comparison or pricing page if those are the points of confusion. ChatGPT updates its understanding as fresher, clearer sources accumulate.

If You’re Mentioned But Rarely Cited as a Source Link

AI systems may struggle to extract information from your pages.

ChatGPT tends to favor pages with:

- Direct answers

- Structured headers

- Concise explanations

- Self-contained definitions

- Clear information near the top of the page

Audit important pages for extractability, not just SEO optimization.

If Your Visibility is Strong But Inconsistent

This usually means the foundation is working, but visibility still depends too heavily on certain prompts or source clusters.

You need to identify which prompts fluctuate most, which external sources ChatGPT relies on for those prompts, and where competitor coverage is stronger than yours.

Fixing specific topic gaps is usually more effective than rebuilding your entire content strategy.

Run the Baseline This Week

Open ChatGPT in an incognito window and run five category-discovery prompts. These are the prompts real buyers use early in their research process. Run each prompt three times and track whether your brand appeared, where it appeared, and which sources were cited. This will take around 30 minutes, and by the end of it, you’ll understand more about your actual AI visibility than most companies in your category currently do.

The teams that move ahead in 2026 will be the ones that set up a baseline, run it consistently, and act on the gaps the data exposes.

For a deeper breakdown of how broader brand mentions influence AI-generated answers, our full guide on brand mentions in SEO walks through what to build once your monitoring system has shown you where you’re invisible.

How often should I monitor brand mentions in ChatGPT?

Weekly tracking is the sweet spot for most teams. ChatGPT’s underlying behavior and source pulls shift often enough that monthly tracking is too slow to spot real movement, while daily tracking creates so much noise that it masks the trend. Run tracking weekly and evaluate trends over four to six weeks.

Can I track ChatGPT mentions with Google Analytics?

Partially. GA4 can capture referral traffic from ChatGPT when users click links cited by the AI. That helps measure downstream traffic. What it does not show is how often your brand appears in answers, how frequently you are mentioned without clicks, and visibility trends across prompts.

Can link building influence AI visibility?

Yes. In many industries, stronger editorial mentions and authoritative backlinks improve the external signals AI systems rely on. This is why many companies work with a specialized link building agency to improve both search and AI discovery signals.

Why does ChatGPT give different answers when I run the same prompt twice?

ChatGPT responses are probabilistic. Each response is one possible output generated from training data, prompt context, retrieval systems, and model weighting. Variance is part of how large language models work. That is exactly why visibility percentage across many prompt runs matters more than any single response.

Is monitoring ChatGPT different from monitoring AI Overviews or Perplexity?

Yes, each platform uses different systems, source weighting, and answer structures. For example, ChatGPT blends training data with selective web retrieval; Perplexity is heavily citation-focused; and Google AI Overviews rely heavily on the traditional Google ranking systems. A complete monitoring strategy tracks these systems separately.

How do brands improve visibility across AI search platforms?

Improving visibility across ChatGPT, AI Overviews, Perplexity, and similar systems usually requires a broader AI search optimization strategy focused on authoritative mentions, structured content, and trusted third-party sources.

Do I need a paid tool to monitor ChatGPT mentions?

Not initially. A manual baseline audit is enough for most teams during the first few months. Paid platforms become useful when you need larger prompt sets, ongoing weekly monitoring, competitor visibility comparison, and long-term trend reporting.

Will improving ChatGPT visibility take traffic from Google?

Usually no. Recent clickstream research suggests that ChatGPT often serves as a discovery layer before users continue their research on Google. In practice, AI visibility and traditional search visibility increasingly support each other rather than compete. Improving one often strengthens the other.

How is ChatGPT visibility connected to generative search?

ChatGPT visibility is becoming part of a broader generative search strategy. As AI systems increasingly shape how users discover brands, improving visibility across AI-generated answers matters alongside traditional SEO.